AI Paranoia: A Conspiracy of Incentives

This is a story about Hacker News, AI paranoia, and incentives.

The first half of this post is mostly my paranoid brain chasing a nonexistent conspiracy theory about massive AI narrative manipulation. But chasing that ghost led me to internalize something much deeper about the morality and incentives of major AI labs.

To understand how I got there, you need two pieces of context: a recent viral Hacker News post, and an old lesson about corporate politics that only just clicked for me.

But first, a premise that lives rent-free in my head: Emulating humans on the internet is trivial nowadays. If an entity has the resources and the incentives to manipulate public discourse through human emulation, they probably will. If that sounds too far-fetched, you might want to stop reading here.

Story 1: Going Viral on HN (and Getting Paranoid)

About a month ago, I shared a blog post titled I miss thinking hard on HN; it unexpectedly went viral. I watched the first hour or so of the viral wave before going to sleep. The discussion was fascinating, but I immediately got the weird feeling that a significant chunk of the comments, maybe 10% to 30%, were clearly AI-generated.

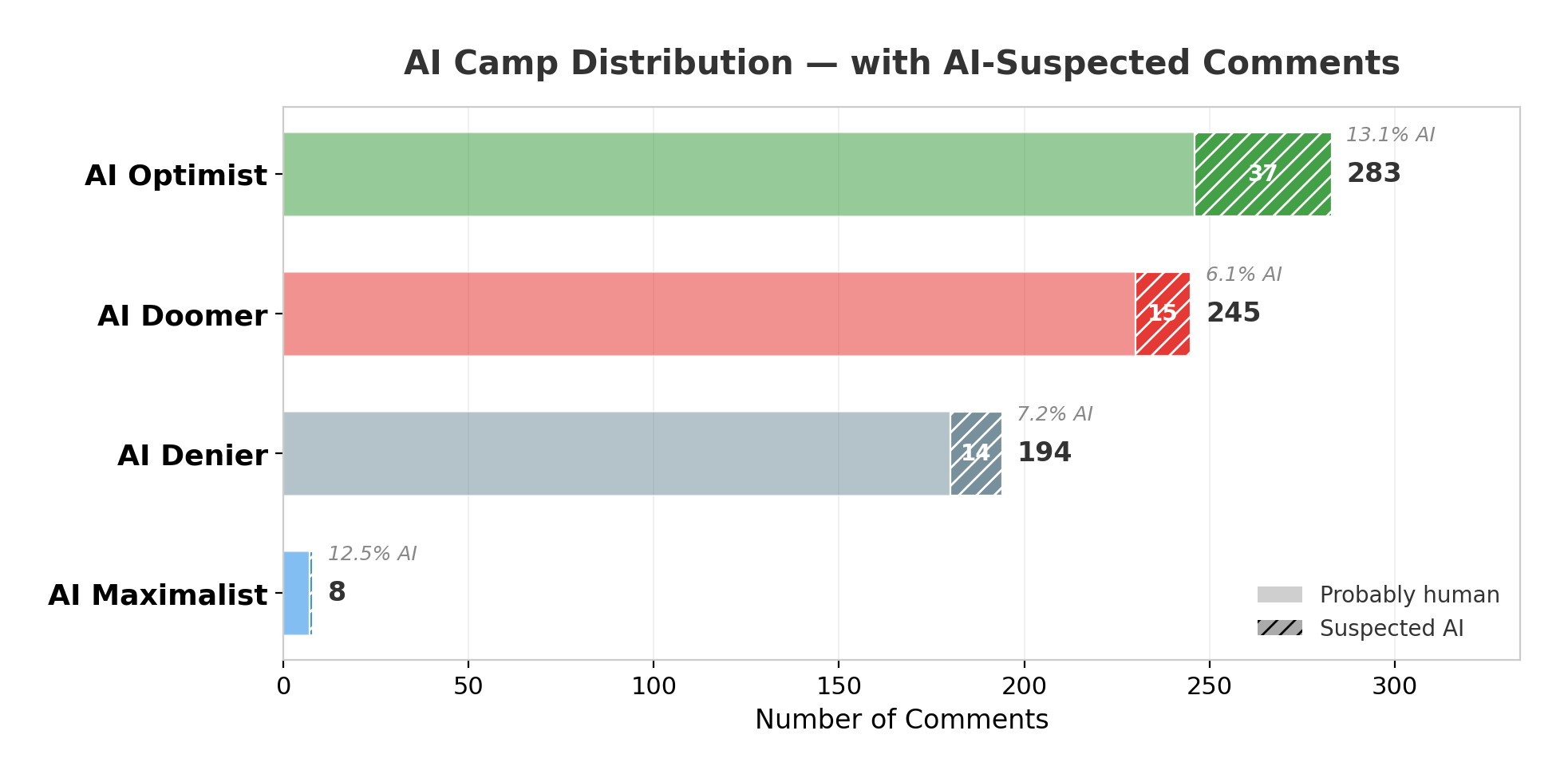

I woke up to almost 600 comments and decided to read them all to figure out why this seemingly simple post provoked such a massive reaction. Broadly speaking, the comments fell into four camps based on the commenter's perspective on AI:

- AI Optimists: AI is a tool that empowers us.

- AI Deniers: AI is just hype.

- AI Maximalists: AI will replace us, and that’s a good thing.

- AI Doomers: AI will replace us, and that’s a bad thing.

As expected, the first camp (AI Optimists) was the most common. I was a firm “Type 1” myself for the longest time. The comments from this group generally echoed a similar sentiment:

AI tools actually empower individuals. Now we can spend our time thinking about important stuff like architecture and creativity, and let AI do the boring tasks.

Variations of this exact thought were the most common response to my post. It was expected. But the weird part was that, as I read through the thread, I started noticing a disproportionately high ratio of “clearly AI-generated” replies pushing this specific idea.

The Intrusive Thought

Let’s not rush; I am probably just biased or paranoid. I needed to get technical. I pulled and parsed all the comments and had four helpful friends, representing each of the four AI camps, rate them on a “probability of being AI” scale. I also ran them through GPT-5.2, Opus 4.6, and Gemini 3.

Long story short: nothing conclusive came out of it. Distinguishing an AI post from an average Hacker News comment is practically impossible.

I was expecting to find a massive bot farm pushing the “AI is just a tool” narrative, at least a 5x proportion of AI comments for the Optimist camp. Instead, I only saw a 2x increase. The data didn’t back it up. I had been paranoid all along.
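For readers who want the mechanics, here is a minimal sketch of the kind of comparison described above. It assumes each comment has a camp label and an averaged "probability of being AI" score. The sample rows, the 0.5 threshold, the choice of "the other camps" as the baseline, and the function names are all hypothetical; this is not the actual data or the exact method used.

```python
from collections import defaultdict


def flagged_share_by_camp(comments, threshold=0.5):
    """Return {camp: fraction of its comments with AI score >= threshold}."""
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for camp, score in comments:
        totals[camp] += 1
        if score >= threshold:
            flagged[camp] += 1
    return {camp: flagged[camp] / totals[camp] for camp in totals}


def relative_ratio(shares, camp):
    """Ratio of one camp's flagged share to the mean share of the other camps."""
    others = [share for name, share in shares.items() if name != camp]
    if not others:
        raise ValueError("need at least one other camp as a baseline")
    baseline = sum(others) / len(others)
    return shares[camp] / baseline if baseline else float("inf")


# Hypothetical toy rows: (camp, averaged AI-probability score). Not real data.
sample = [
    ("optimist", 0.8), ("optimist", 0.7), ("optimist", 0.2), ("optimist", 0.1),
    ("denier", 0.6), ("denier", 0.1), ("denier", 0.2), ("denier", 0.3),
    ("doomer", 0.9), ("doomer", 0.1), ("doomer", 0.2), ("doomer", 0.3),
]

shares = flagged_share_by_camp(sample)
ratio = relative_ratio(shares, "optimist")
print(f"optimist flagged share is {ratio:.1f}x the other camps' average")
```

With a few hundred comments and noisy ratings, a ratio like this moves a lot depending on the threshold, which is part of why nothing conclusive came out of it.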

What? You want to see the raw data to find out what gets a comment flagged as AI-generated?

You'd love to know that, wouldn't you, major AI lab reading this?

Welcome to vibe statistics.

I had spent two days chasing a false conspiracy theory. But in that moment of reflection, an old memory came to me.

Story 2: Tools Have Incentives

Some years ago, I worked as an ML engineer at a large corporation. I’ll keep the details intentionally vague, but my team was tasked with building a system to “improve the efficiency” of a group of workers I’ll refer to here as back-office specialists.

Right off the bat, there was a silent truth we all knew: the long-term plan was to cut the specialists altogether. No one said it out loud, but we (the ML team) and the directors knew that “improving efficiency” was just corporate speak for eventual automation.

The problem was that we knew next to nothing about their work. To build the system, we needed the team of specialists to explain their workflows, map out their mental models, and, of course, verify that our models were working at all.

How do you get people to help you replace them?

Simple: you start by building things that are genuinely useful to them.

We built tools. The specialists used them, gave us feedback, and told us what to build next. There was no evil mastermind or explicit multi-year replacement strategy. It was just the natural flow of the project. But the incentive structure was obvious.

Naturally, the specialists could feel the underlying intentions and were reluctant to cooperate at first. But the directors shared the same incentives as us, so the tools stayed and the feedback loop of improvement continued.

I eventually switched projects and never actually saw how things evolved, but the realization stuck with me:

If you want to replace someone, you need them to succeed first. Giving them tools that actually help is the best way to slowly but surely extract their mental processes.

The Lesson: They Stole Our Code, Now They Want Our Brains

Maybe my paranoia had some foundation after all. Thinking about the incentives behind “AI tools” introduces moral questions beyond the usual environmental impacts and privacy concerns.

Big AI labs have a massive incentive to play both sides. When pitching to investors and stakeholders, AI is “a human replacement” and the ultimate solution for all current and future problems. But when talking to you, the very person whose creativity and mental process they need to extract, AI is presented as “just a tool that will help you do more, faster.”

These companies have already made billions off the code and words we freely poured into the internet with the best of intentions, not to mention the copyrighted material they stole. Now, they need our ongoing workflows and mental processes. And they are slowly distilling them to be repackaged and sold for profit.

I’m not saying AI is inherently evil or that you should stop using it. But we need to be conscious of the real incentives at play, incentives designed to make us play the game exactly how they want, even if we never signed up for it.